Sujay P

Data Architect

Skills

Learn about my services

Portfolio

Professional experience

Data Architect

Culinary Digital

Jan 2026 - Mar 2026 • 2 mos

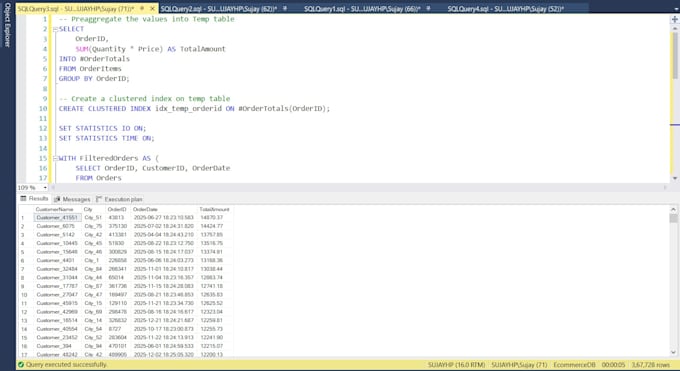

Culinary Suite is a SQL Server-based data platform designed for large-scale workloads. It focuses on performance optimization, ETL efficiency, and scalable data architecture, and supports Agile delivery and seamless client onboarding.

Technologies: Microsoft SQL Server 2019, SSMS, SQL Profiler

Role and responsibilities (Data Architect):

- Optimized complex SQL Server queries, stored procedures, and execution plans for high-volume transactional and analytical workloads, significantly improving system performance and query response times.
- Analyzed and resolved performance bottlenecks across large enterprise databases using indexing strategies, execution-plan tuning, and query refactoring.
- Improved the performance of large, complex stored procedures (including codebases of several thousand lines), reducing execution time and improving data-processing efficiency.
- Led client onboarding by setting up application environments and configuring SQL Server databases for new customers.
- Designed and deployed database schemas, configurations, and initial data loads so that new clients' systems were ready for use.
- Collaborated with implementation and support teams to ensure smooth deployment, data validation, and stable post-deployment performance.
- Participated actively in sprint planning, code reviews, and production support, ensuring timely delivery of high-quality data solutions.
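Much of the tuning work described here comes down to reading execution plans and confirming that an index turns a full scan into an index seek. The sketch below illustrates that check using Python's built-in `sqlite3` module as a stand-in for SQL Server, where the equivalent step would be comparing actual execution plans in SSMS. The `orders` table, its columns, and the `idx_orders_customer` index are hypothetical.

```python
import sqlite3


def plan_details(conn: sqlite3.Connection, sql: str, params: tuple = ()) -> list[str]:
    """Return the 'detail' column of SQLite's EXPLAIN QUERY PLAN output."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return [row[3] for row in rows]


# Hypothetical transactional table with enough rows to make a scan noticeable.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, amount REAL)")
conn.executemany(
    "INSERT INTO orders (customer_id, amount) VALUES (?, ?)",
    [(i % 1000, float(i)) for i in range(10_000)],
)

query = "SELECT id, amount FROM orders WHERE customer_id = ?"

# Plan without a supporting index: the whole table has to be scanned.
before = plan_details(conn, query, (42,))

# Add an index on the filter column, then ask for the plan again.
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
after = plan_details(conn, query, (42,))

print("before:", before)
print("after: ", after)
```

Before the index exists, SQLite reports a scan of `orders`. Afterwards, it reports a search using `idx_orders_customer`. SQL Server's cost-based optimizer is far more elaborate, so this example only illustrates the scan-versus-seek idea and does not model SQL Server's behavior.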

Supraj Solutions LLP

Self-employed • 10 yrs 6 mos

Data Architect

Sep 2020 - Dec 2025 • 5 yrs 3 mos

Data Engineering Tool (DET): DET is a data engineering tool that is under development and not yet available on the market. It is built on two principles: decoupled configuration and the medallion architecture. It currently supports data extraction from common databases, namely MySQL, PostgreSQL, and ClickHouse, and from common file formats (CSV and JSON). Between layers, the tool supports load and transformation patterns such as truncate load, incremental load, SCD Type 1, and SCD Type 2, with optional pre- and post-transformation steps.

Technologies: Microsoft Azure, Apache Airflow, Apache Spark (PySpark), PostgreSQL, MySQL, Snowflake

Role and responsibilities (Data Architect):

- Produced the high-level design (HLD) and low-level design (LLD) for the product.
- Defined and implemented scalable cloud-based data architectures on Azure to meet business requirements and performance benchmarks.
- Developed and managed ETL/ELT pipelines and orchestration flows using Airflow DAGs, with robust scheduling, error handling, and dependency management.
- Used PySpark to design and optimize large-scale data transformation jobs, covering schema mapping, cleansing, and aggregation of data from diverse sources.
- Established and documented data models, enforced data quality standards, and implemented governance practices to ensure integrity, compliance, and security.
- Oversaw resource allocation, monitored system performance, and optimized compute and storage to improve operational efficiency and control cloud costs.
- Partnered with cross-functional teams (data engineers, analysts, and business stakeholders) to align data solutions with strategic goals, mentored team members, and drove technology adoption.
- Created comprehensive documentation of data flows and architectures, and identified opportunities for continuous improvement of the data engineering solutions.
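The load patterns listed for DET can be pictured as interchangeable strategies that are selected by configuration rather than hard-coded into each pipeline. What follows is a minimal in-memory sketch under stated assumptions, not DET's actual implementation. Lists of dicts stand in for tables or Spark DataFrames in a medallion layer. `LoadConfig`, `run_load`, the strategy names, and the `valid_from`/`valid_to`/`is_current` columns are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Callable, Optional

Rows = list[dict]


# Hypothetical pipeline configuration, kept separate from the code that executes it.
@dataclass(frozen=True)
class LoadConfig:
    strategy: str              # "truncate", "incremental", "scd1" or "scd2"
    key: str                   # business key column
    watermark: str = ""        # column compared by incremental loads
    tracked: tuple = ()        # columns whose changes open a new SCD-2 version
    pre: Optional[Callable[[Rows], Rows]] = None   # optional pre-transformation
    post: Optional[Callable[[Rows], Rows]] = None  # optional post-transformation


def truncate_load(target: Rows, source: Rows, cfg: LoadConfig, as_of: date) -> Rows:
    # Discard the target and reload the full source snapshot.
    return [dict(r) for r in source]


def incremental_load(target: Rows, source: Rows, cfg: LoadConfig, as_of: date) -> Rows:
    # Append only source rows whose watermark exceeds the highest one already loaded.
    high = max((r[cfg.watermark] for r in target), default=None)
    return target + [dict(r) for r in source if high is None or r[cfg.watermark] > high]


def scd1_load(target: Rows, source: Rows, cfg: LoadConfig, as_of: date) -> Rows:
    # Upsert by business key, overwriting changed attributes; no history is kept.
    merged = {r[cfg.key]: dict(r) for r in target}
    for r in source:
        merged[r[cfg.key]] = dict(r)
    return list(merged.values())


def scd2_load(target: Rows, source: Rows, cfg: LoadConfig, as_of: date) -> Rows:
    # Expire the current version when a tracked column changes, then add a new version.
    out = [dict(r) for r in target]
    current = {r[cfg.key]: r for r in out if r["is_current"]}
    for r in source:
        old = current.get(r[cfg.key])
        if old is not None and all(old[c] == r[c] for c in cfg.tracked):
            continue  # tracked attributes unchanged: keep the existing version
        if old is not None:
            old["is_current"] = False
            old["valid_to"] = as_of
        new = {**r, "valid_from": as_of, "valid_to": None, "is_current": True}
        out.append(new)
        current[r[cfg.key]] = new
    return out


STRATEGIES = {
    "truncate": truncate_load,
    "incremental": incremental_load,
    "scd1": scd1_load,
    "scd2": scd2_load,
}


def run_load(target: Rows, source: Rows, cfg: LoadConfig, as_of: date) -> Rows:
    # Apply the optional pre-step, the configured strategy, then the optional post-step.
    if cfg.pre:
        source = cfg.pre(source)
    result = STRATEGIES[cfg.strategy](target, source, cfg, as_of)
    return cfg.post(result) if cfg.post else result


# Usage: a customer's city changes, so SCD-2 keeps both versions.
cfg = LoadConfig(strategy="scd2", key="customer_id", tracked=("city",))
dim = run_load([], [{"customer_id": 1, "city": "Pune"}], cfg, date(2025, 1, 1))
dim = run_load(dim, [{"customer_id": 1, "city": "Mumbai"}], cfg, date(2025, 6, 1))
for row in dim:
    print(row)
```

Keeping the strategy name, keys, and hooks in a configuration object, which could itself be stored in a configuration database, is what lets one executor serve every pipeline. In a PySpark setting, SCD-2 would more typically be expressed as a join against the current dimension, or as a `MERGE` statement where the table format supports one.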

Data Architect

Sep 2020 - Dec 2025 • 5 yrs 3 mos

As part of the ongoing development of the Data Engineering Tool, the configuration database (which stores the pipeline configurations) needed to be separated from development and test data. This configuration database was initially hosted on MySQL, and we migrated it to PostgreSQL.

Technologies: Microsoft Azure, PostgreSQL, pgloader, MySQL, mysqldump

Role and responsibilities (Data Architect):

- Designed and implemented an end-to-end migration strategy for a small-scale configuration database.
- Assessed and updated database schema elements, including tables, data types, constraints, and indexes.
- Developed and executed robust data export/import pipelines using tools such as `mysqldump`, `pgloader`, and native PostgreSQL utilities, and validated data accuracy and completeness after migration.
- Re-engineered relational models to take advantage of PostgreSQL's constraint mechanisms, including foreign key enforcement and cascading actions.
- Coordinated integration and acceptance testing to verify application compatibility, data consistency, and transaction reliability after migration.
- Produced detailed technical documentation, including migration scripts and architecture diagrams, to support knowledge transfer and ongoing database administration.
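Validating accuracy and completeness after a migration usually means comparing each table's row count and contents between source and target. A minimal sketch, assuming both databases can be queried from Python: here two in-memory `sqlite3` databases stand in for MySQL and PostgreSQL, and the `pipeline_config` table and the `table_fingerprint`/`validate_migration` helpers are hypothetical.

```python
import hashlib
import sqlite3


def table_fingerprint(conn: sqlite3.Connection, table: str, order_by: str) -> tuple[int, str]:
    """Return (row count, SHA-256 of all rows read in a deterministic order)."""
    # Table and column names are interpolated, so they must come from a trusted list.
    rows = conn.execute(f"SELECT * FROM {table} ORDER BY {order_by}").fetchall()
    digest = hashlib.sha256()
    for row in rows:
        digest.update(repr(row).encode("utf-8"))
    return len(rows), digest.hexdigest()


def validate_migration(source: sqlite3.Connection, target: sqlite3.Connection,
                       tables: dict[str, str]) -> list[str]:
    """Return the names of tables whose count or content differs between databases."""
    return [
        table
        for table, order_by in tables.items()
        if table_fingerprint(source, table, order_by) != table_fingerprint(target, table, order_by)
    ]


# Usage: two in-memory databases stand in for the MySQL source and PostgreSQL target.
src = sqlite3.connect(":memory:")
dst = sqlite3.connect(":memory:")
for conn in (src, dst):
    conn.execute("CREATE TABLE pipeline_config (id INTEGER PRIMARY KEY, name TEXT, enabled INTEGER)")

rows = [(1, "orders_bronze", 1), (2, "orders_silver", 0)]
src.executemany("INSERT INTO pipeline_config VALUES (?, ?, ?)", rows)
dst.executemany("INSERT INTO pipeline_config VALUES (?, ?, ?)", rows[:1])  # simulate a lost row

print(validate_migration(src, dst, {"pipeline_config": "id"}))
```

With real MySQL and PostgreSQL drivers, values would need to be normalized before hashing. For example, a MySQL `TINYINT(1)` column may be read as `1`, while the PostgreSQL `boolean` column it was migrated to is read as `True`. The row count alone catches lost rows, and the digest additionally catches silently altered values.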